Falcon Perception

Next-generation multimodal model that unifies vision and language in a single dense transformer architecture

Advanced perception capabilities, combining image and text understanding in one framework.

Processes images and text together from the very first layer. Identify objects, highlight parts of an image, or read text from documents in one model.

Powerful. Efficient. Practical to deploy.

overview

Falcon Perception is a multimodal AI model that enables systems to see, read, and understand images using natural language prompts.

By combining vision and language capabilities in a single architecture, Falcon Perception simplifies how AI interprets visual information while remaining efficient.

A Unified Approach to Visual Understanding

Falcon Perception extends the Falcon ecosystem beyond language models into advanced visual perception. It is designed to handle tasks such as object grounding, image segmentation, and document understanding within a single model.

Traditional vision languge systems are often built as pipelines, with separate components for image encoding, language fusion, and task-specific outputs. Falcon Perception takes a simpler approach, using a single early-fusion transformer that processes image and text information together from the first layer.

This avoids many of the bottlenecks and added complexity found in modular systems, making the model easier to scale and more efficient to run.

One Model,

Multiple Visual Tasks

Falcon Perception is built to work across a range of perception tasks using a unified structure. It supports open-vocabulary perception, meaning users can interact with images using natural language prompts rather than relying on fixed label sets. In practice, that allows the model to identify objects from written descriptions, highlight the correct regions in an image, and interpret text within documents using one shared architecture.

The model uses a lightweight structured output process to move from object location to object size to segmentation, allowing it to handle variable numbers of objects in an image without the overhead of heavier decoder-based systems.

Built for Practical Deployment

Falcon Perception is designed for practical use across domains including document analysis, medical imaging, satellite imagery, robotics, and autonomous systems. Its combact architecture is intended to deliver strong multimodal performance without the size and infrastructure demands typically associated with large vision-language systems.

Medical image interpretation

Satellite and geospatial imagery

Robotics and autonomous systems

Competitive Performance at Compact Scale

Despite its compact size, Falcon Perception delivers strong results across perception benchmarks. With a 0.6B-parameter early-fusion model for segmentation and grounding, and a 0.3B model for document understanding, it delivers a strong multimodal performance without the scale typically required by vision-language systems.

benchmark

Despite its compact size, Falcon Perception demonstrates strong performance across leading benchmarks:

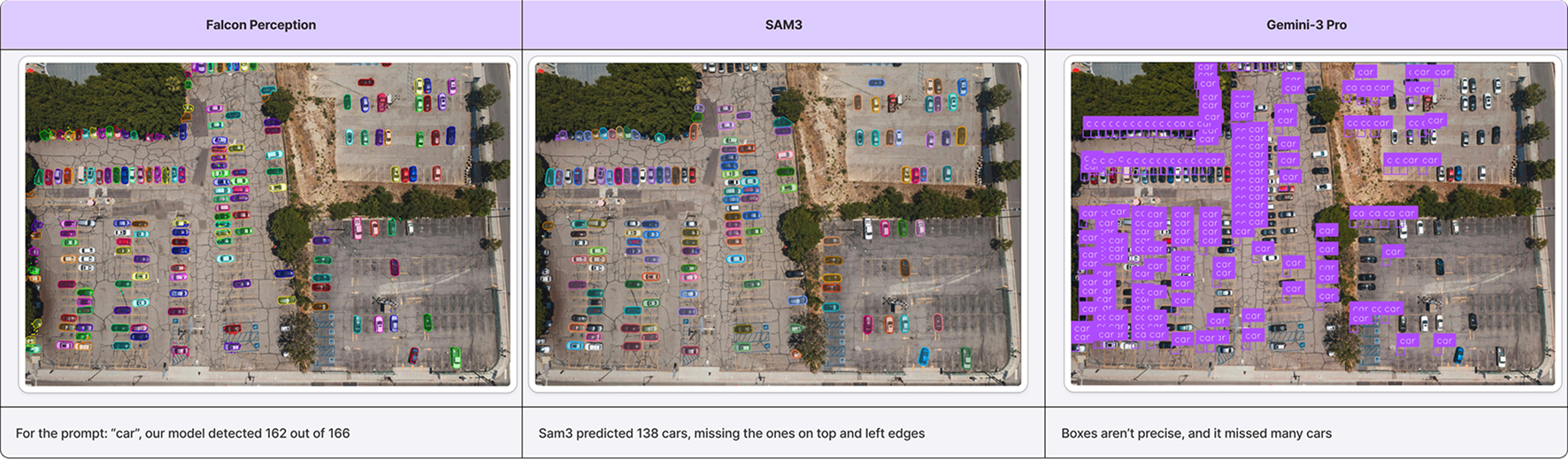

- Segmentation: Matches state-of-the-art results from leading models such as Meta’s SAM3 on the SaCO benchmark for object segmentation.

- Document understanding: Achieves competitive results on OmniDocBench, matching or approaching the performance of much larger systems including Mistral-OCR, DOTS-OCR, and Qwen-VL-235B.

- Complex visual understanding: Outperforms competing models on more challenging prompts involving attributes, comparisons, and dense scenes.

This performance-to-efficiency ratio highlights a broader shift in AI innovation:

Progress is increasingly defined not only by scale, but by architectural refinement and deployability.

Falcon Perception

Benchmarking Intelligence

Where do we stand?

| FEATURE | FALCON PERCEPTION | MOONDREAM3 | QWEN3 | SAM3 |

|---|---|---|---|---|

| Architecture | Early fusion Dense | ViT+Dense | ViT+Dense | DETR |

| Size | 0.6B | 2/9B | 4B/8B | 0.9B |

| Simple Nouns | ||||

| Complex Expressions | ||||

| Segmentation | ||||

| Interactive Refinement | ||||

| Auto-regressive |

* Performance benchmarks based on standardized evaluation metrics

Segmentation Performance

On the SaCO open-vocabulary segmentation benchmark, Falcon Perception achieved 68.0 Macro-F1, compared with 62.3 for SAM 3, with especially strong gains on more complex prompt types and dense scenarios.

The model also performs strongly on PBench, a benchmark introduced to test specific capabilities such as attributes, OCR-guided identification, spatial understanding, relations, and crowded scenes. The largest gains appear as prompts become more demanding, particularly when the task requires reading text in images, understanding spatial constraints, or reasoning about relationships between objects.

Falcon-OCR

Document Understanding

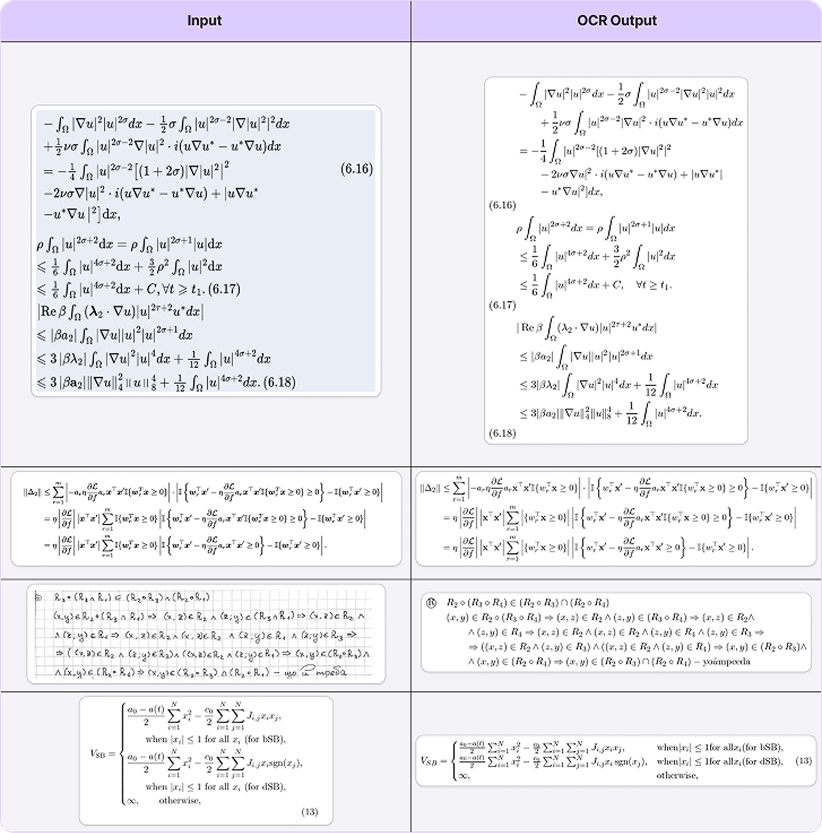

For OCR and document tasks, the Falcon OCR model achieved scores of 80.3 on olmOCR and 88.6 on OmniDocBench, with the highest throughput among open-source OCR models.

Together, these results show that a unified vision-language design can compete with — and in some areas outperform — larger or more specialized systems, while keeping the overall architecture compact and efficient.

Falcon Perception is powerful, efficient, and practical to deploy.

By bringing image understanding, segmentation, and document reading into a more unified architecture, Falcon Perception helps simplify multimodal AI development while expanding what compact models can do.

Falcon OCR

Benchmarking OCR Intelligence

| FEATURE | FALCON OCR | PADDLE | DoisOCR | Qwen3-VL-235B |

|---|---|---|---|---|

| Architecture | Early fusion Dense | ViT+Dense | ViT+Dense | ViT+Dense |

| Size | 0.3B | 0.9B | 2B | 235B |

| Layout Recognition | ||||

| Element Parsing | ||||

| VQA | ||||

| Information Extraction |

* Performance benchmarks based on standardized evaluation metrics